GPT-3, the newest OpenAI solution, has fooled hundreds of Internet users - articles created by artificial intelligence met with great reception from unaware readers.

source: own elaboration

Just a few weeks ago, the artificial intelligence research laboratory OpenAI presented its latest creation. It is the newest version of GPT (short for Generative Pre-trained Transformer), an autocomplete-style tool that continues a text based on the prompt it receives. Although the tool hasn't yet been released to the public, it is already causing a great deal of controversy, in large part because of an experiment carried out by one student...

GPT-3's predecessor, GPT-2, which was released last year, was able to complete sentences meaningfully and even create longer passages on a given topic in natural, human-like language. However, OpenAI held back the full release of this extremely advanced text-producing algorithm until November 2019 for fear that it might be misused.

In August 2020, when the GPT-3 model was completed, a different strategy was tried - the algorithm was initially made available only to researchers who had applied for access to a private beta version. After testing it, they were expected to provide feedback to OpenAI.

More than 30 researchers worked on GPT-3 for a year. The resulting model has 175 billion parameters, the learned weights that determine the relationships between network elements (more than 100 times as many as in the previous version). It is an autoregressive model, which means that it generates text one token at a time, predicting each next token from the ones that came before it. It learned to do this by picking up statistical patterns in a huge corpus of text, without humans labeling the data. To put it simply, for a given prompt GPT-3 picks the continuation that is statistically best suited to it. For example, if the keyword is "coronavirus", the tool will rate "mask" and "disease" as likely related words, and reject much less fitting ones such as "flowers" or "moon". In the SuperGLUE benchmark, GPT-3 achieves excellent results on reading comprehension, while it does less well on tasks that require analyzing text.
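The idea of picking the statistically most likely continuation can be illustrated with a toy sketch. The code below is not GPT-3 and not a transformer: it is a simple bigram counter over a tiny, made-up corpus, and all names in it (`CORPUS`, `train_bigrams`, `next_word_probs`, `generate`) are hypothetical and chosen only for this illustration. It shows why "mask" and "disease" come out as likely follow-ups to "coronavirus", while "flowers" and "moon" do not.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus, for illustration only (not GPT-3's training data).
CORPUS = [
    "coronavirus mask rules are strict",
    "coronavirus disease spreads fast",
    "coronavirus mask sales are up",
    "the moon is bright",
    "flowers need water",
]


def train_bigrams(sentences):
    """Count how often each word is directly followed by each other word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def next_word_probs(counts, word):
    """Turn the follower counts of `word` into probabilities."""
    followers = counts.get(word, Counter())
    total = sum(followers.values())
    return {w: c / total for w, c in followers.items()} if total else {}


def generate(counts, prompt, max_new_words=3):
    """Autoregressive loop: repeatedly append the most likely next word.

    Assumes `prompt` contains at least one word.
    """
    words = prompt.split()
    for _ in range(max_new_words):
        followers = counts.get(words[-1])
        if not followers:
            break  # the model has never seen anything follow this word
        words.append(followers.most_common(1)[0][0])
    return " ".join(words)


MODEL = train_bigrams(CORPUS)
PROBS = next_word_probs(MODEL, "coronavirus")

if __name__ == "__main__":
    print(PROBS)
    print(generate(MODEL, "coronavirus", 1))
```

In this toy corpus "mask" follows "coronavirus" twice and "disease" once, so both receive a non-zero probability, whereas "flowers" and "moon" never follow it and are not candidates at all. A real language model replaces the counting table with a neural network that conditions on the whole preceding text, but the generation loop has the same shape: predict, append, repeat.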

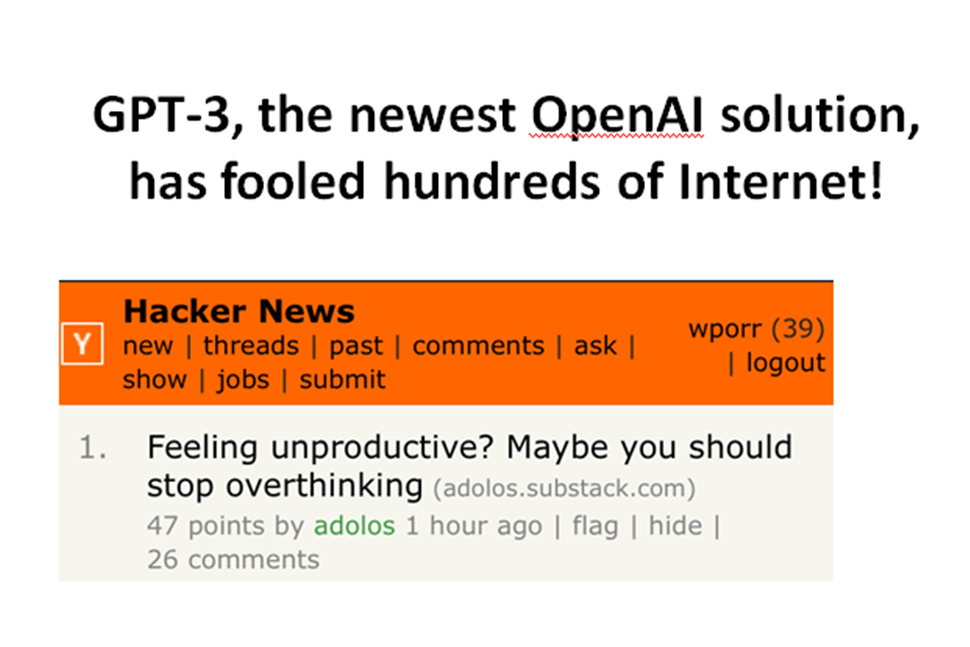

When Liam Porr, a student at the University of California, Berkeley, heard about the newest text-writing algorithm, he had an idea. He applied to OpenAI for access, but didn't want to wait for it. Instead, he found a partner - a doctoral student who already had such access. Porr created a scenario for GPT-3 to follow. He supplied only a headline that matched a topic he had carefully selected, and on that basis the algorithm generated a text that, after slight editing, was posted on forums such as Hacker News under the pseudonym "adolos". The texts created by the algorithm turned out to be a hit - they quickly gained popularity. Of course, it wasn't a coincidence - Porr chose topics that had already proven popular on the forums and had GPT-3 write similar texts. In addition, the loose form of blog and forum posts didn't demand rigorous logic from the algorithm.

The student continued the experiment for 2 weeks and then gave it up. As he said in a later interview with MIT Technology Review, he intentionally instructed the algorithm to create an article about productivity ("Feeling unproductive? Maybe you should stop overthinking"), because GPT-3 was very good at creating nice sentences, but had a problem with logic and rationality.

It was supposed to be a fun experiment, but the results turned out to be very controversial. The scary fact is that the vast majority of readers were not only delighted with the texts, but had no suspicions about their origin. Interestingly, when someone on the forum suggested that a post had been written by GPT-3 or another content-generating bot, they were immediately met with a wave of criticism. As the author of the experiment himself says, the most frightening thing about it was that it was so easy to carry out.

Below is a sample of the text written by GPT-3 during the experiment:

"Definition #2: Over-Thinking (OT) is the act of trying to come up with ideas that have already been thought through by someone else. OT usually results in ideas that are impractical, impossible, or even stupid."

A new era of content?

Recently, sensational news spread around the world: Microsoft (which, by the way, cooperates closely with OpenAI), the publisher of Microsoft News and MSN, among others, is replacing 50 of its editors in the United States and 27 in the United Kingdom with artificial intelligence. Until now, the editors and journalists employed by the company prepared the articles appearing on the website, in applications and in the Microsoft Edge browser. Now specially created algorithms are to do it. They will scan texts already published on the internet, choose the best ones, and then edit and publish them again. The layoffs are said to be part of a larger plan by the company, which wants AI to play a greater role in its operations. It is certainly an innovative approach, but if Microsoft's idea is also adopted by other companies, it may quickly turn out that we stop producing fresh content and merely keep reworking what already exists. For this reason, such use of artificial intelligence raises a lot of controversy.